Human beings aren’t just getting greedier, but stupider.

That’s according to Professor Stephen Hawking: and, really, it doesn’t seem like a particularly shocking statement or commentary.

Simple observation alone seems to indicate a rapid stupidification process going on all over the place, for a whole host of reasons and manifesting in a whole bunch of different ways.

You see it in society. You see it in people. You see it in politicians and political discourse. You see it all across social media. You see it in the entertainment industry. You see it in the White House. I’m not even entirely sure when it started to happen – but we appear to be fast heading towards the comic dystopian future envisioned in the cult movie Idiocracy.

For anyone who’s never seen Idiocracy, it depicts a future in which mankind is so stupid that, when a time-displaced man from the present day gets stuck there, he immediately becomes the most intelligent person on the planet – a saviour figure who becomes the source of all decisions and guidance for a human society that has lost its intellectual capacity.

Professor Hawking may have been speaking tongue-in-cheek – he did have a wry sense of humour, as evidenced by his cult appearances in Futurama, The Simpsons, The Big Bang Theory and Star Trek: The Next Generation. But there’s also no doubt that he was making a serious point.

In an interview with Larry King on the terrific Larry King Now talk show last year (which airs on RT in the UK), Professor Hawking described increasing greed and stupidity as the biggest threats to humanity’s survival, arguing that human beings are becoming stupider and greedier by the day.

And that this is going to push humanity towards extinction-level crises earlier than once predicted.

Some of his commentary related to the presidency of Donald Trump and in particular to the US withdrawal from the Paris Climate Agreement, but he was also focused on more pervasive things like the levels of air pollution that most people are regularly exposed to.

Hawking had in recent years, particularly the last year, become something of a prophet of doom, to the extent that he was even starting to get lightly mocked for his frequent warnings of catastrophe. His warnings had also drawn argument or criticism from other experts in the fields they concerned.

It is possible that, as he advanced in age and perhaps became even more conscious of his mortality, Professor Hawking felt an increasing sense of urgency in speaking about possible or likely existential dangers to the human race or the planet.

Certainly, given some of the less cautious and more gung-ho elements of various scientific fields (particularly in relation to the rapid progression of AI, as well as areas like cyber warfare), there is something to be said for Professor Hawking’s more cautious commentary in an era that could be described as being a dangerous crossroads for the human race.

Of course, Stephen Hawking wasn’t omniscient – meaning he could always be wrong or just overreacting.

But science needs high-profile voices providing warning.

Or even, sometimes, objection. Albert Einstein, Eugene Wigner and Leó Szilárd were all early opponents of nuclear weapons: indeed, even Robert Oppenheimer, the ‘father of the bomb’, almost immediately regretted his contribution to war and destructive capability, and – within two months – was already calling for a total ban on all nuclear weapons development.

And yet we’ve been trying, without success, to pressure world powers to get rid of nuclear weapons ever since – but it appears there’s no going back. That’s why it’s important for warnings and concerns to be registered ahead of time and not merely after the fact.

Hawking argued that sheer human greed would stop us from dealing with global warming or other environmental problems, while rapidly advancing technology and the digitisation of human activity would create new existential threats on top of the existing threats of nuclear weapons, over-population, resource scarcity and so on.

Recently, Hawking suggested that human beings may have as little as 100 years left on the planet. He cited climate change, epidemics and population growth as major contributors to a revised doomsday clock, but also cited extra-terrestrial threats such as asteroid strikes (which he said we are overdue for).

In 2006, Hawking asked “In a world that is in chaos politically, socially and environmentally, how can the human race sustain another 100 years?”

Again, some balk at Hawking’s commentary, particularly the 100 years prediction.

But his view of the human race’s situation seems to have become so grim that he was advocating the human race’s escape from the planet Earth – that mankind should begin moving into space as soon as possible to provide the option for a degree of human survival even if Earth-based civilisation itself doesn’t survive.

He warned that if humans don’t grow into an inter-planetary race soon and settle on other worlds, our species could die out within the next century.

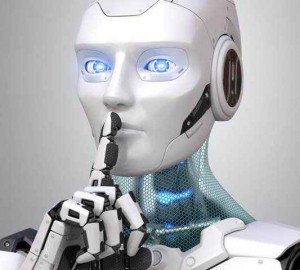

Last year, Professor Hawking was very forthright with his warnings about the development of artificial intelligence and robots, warning that AI is going to quickly reach a point at which it will become a new form of life, entirely capable of outgrowing and outperforming human beings – with the likelihood that it might one day seek to replace us entirely.

“I fear that AI may replace humans altogether,” he said. “If people design computer viruses, someone will design AI that improves and replicates itself.”

Whether the professor was envisioning a scenario like the one in The Matrix isn’t clear – though that kind of eventuality is certainly one way to interpret his commentary. In 2014, he said that AI was our “worst mistake in history”.

As in the mythology of The Matrix movies, the idea is that advanced AI could completely outsmart us before we’ve even figured out what’s going on. “One can imagine such technology outsmarting financial markets, out-inventing human researchers, out-manipulating human leaders,” he said, “and developing weapons we cannot even understand…”

He also put his name to a letter by the Future of Life Institute, calling for a prohibition against the development of autonomous weapons that are “beyond meaningful human control”. The fear, shared by numerous scientists and experts, is that we’re not far away at all from the development of autonomous systems (militarised AI or robotic warfare) in battlefield scenarios – a development described by some as the ‘Third Revolution in Warfare’, the first two being the invention of gunpowder and the invention of nuclear weapons.

In the last couple of years, Hawking also drew a lot of attention for warning about the dangers of contact with extra-terrestrial intelligences, warning that we – as a species – should be wary of seeking out alien races, who could easily be hostile. More than that, he argued that intelligent or advanced alien civilisations would not think much of us, as we would be a primitive people to them. “If aliens visit us, I think the outcome would be much as when Columbus landed in America,” he said, during the Into the Universe series on the Discovery Channel, “which didn’t turn out well for the Native Americans.”

He noted elsewhere, “If you look at history, contact between humans and less intelligent organisms have often been disastrous from their point of view, and encounters between civilisations with advanced versus primitive technologies have gone badly for the less advanced.”

Some of Professor Hawking’s recent statements or warnings had not been well received by various commentators or researchers, and in some cases he was accused of fearmongering, aping clichéd science-fiction ideas, or even attention-seeking.

However, it could just as easily be argued that he was providing a counter-balance – after all, Stephen Hawking was hardly a Luddite or an anti-scientific mind, so his warnings were not motivated by the same things that drive most of the anti-science trends currently proliferating in conspiracy-based online commentary.

Science needs dissent and argument – without that, it descends into fixed dogmas and becomes more like religion.

I have also noticed that Hawking has been singled out by conspiracy enthusiasts as somehow being an ‘agent of the New World Order’ (but, really, who isn’t?). They base this on some of the things Hawking said in recent years, such as talking about climate change and over-population, advocating mankind’s movement into space, or even seemingly advocating a ‘one-world government’.

I don’t buy any of that, however. Over-population is a real thing – there’s no point in labelling anyone who mentions it an ‘agent of the New World Order’. And I personally think a one-world government is probably inevitable and might even some day be necessary: I wouldn’t want or trust our current, dodgy-as-fuck networks of political organisations and globalist bodies to create or oversee that move, but the emergence – one day in the future – of some form of global government seems inevitable.

It’s just a question of whether it’s going to be an oppressive, unhealthy one or something better; and, obviously, a question of who is controlling its establishment and whether they’re doing it in a way that is likely to benefit all of common humanity or a way that will benefit only a select section of the population.

In other words, I’m saying it isn’t the principle of one-world government that’s a problem – it’s the issue of what the reality of it will be that’s the problem.

However, while it’s impossible to know whether he was wrong or right in specific predictions (we won’t be able to know for a while yet), some of the general warning is hard to argue with.

That we need to be highly circumspect and cautious about advancing artificial intelligence should be obvious – but a number of AI enthusiasts were dismissive of Hawking’s sentiments.

I would suggest that the combination of increasingly dumbed-down human societies and increasingly advanced AI would make it seem almost inevitable that humans – flawed, emotional, greedy, fat, temperamental – will eventually cede more and more agency and responsibility to the more efficient intelligence of AI.

If that ends up being the trajectory, then we could conceivably end up with our fate resting entirely in the hands of AI. From that point on, a Matrix-style scenario could become much more likely.

It isn’t much of a leap – just think about how dependent we already are on the Internet, computers and mobile phones. And then think how dependent we might one day be on much more advanced and all-encompassing AI.

It isn’t even necessarily about AI turning malevolent or anything of the sort: it could be seen purely in terms of evolution, where human beings become redundant. Shit, AI might even decide it is better for the health of the planet than human beings are – and that might even end up being the case, particularly if the environmental problems continue or escalate.

In terms of our own survival as the dominant species of the planet, the key would have to be finding – and carefully maintaining – some kind of agreed-upon equilibrium between retaining human agency and developing AI, where we avoid reaching the point where we are entirely dependent on AI. I think that’s the gist of what Hawking was warning about: the problem is, if our total dependency on the Internet (in the space of a mere 15 years or so) is anything to go by, that’s precisely what is destined to go wrong – we WILL end up entirely dependent on AI.

On Hawking’s view of the dangers of alien contact, I have always thought that it’s incredibly dangerous to court potential extra-terrestrial powers or races – because any ET power capable of interstellar travel is, by necessity, technologically superior to us and would view us as primitive. And, as Hawking pointed out himself, if the history of human societies is anything to go by, advanced societies have a habit of abusing less-advanced ones.

His Native-American analogy seems apt: I would also suggest that a truly advanced ET power might view us the way the British Empire viewed India.

In fact, I wonder if Hawking’s warnings about ET contact were targeted not just at the mainstream scientific community or organisations like SETI, but also at those engaged in the more occult side of things, as well as the massive community of Ancient Astronaut Theory enthusiasts. One of the things that has always stuck out like a sore thumb to me in regard to the centrepiece of Ancient Astronaut Theory – specifically the mysteries of the ‘Anunnaki’ at Sumer (ancient Iraq) – is that the Sumerian testimony seems to suggest that human beings were envisioned as worker-drones (or slaves) for the allegedly extra-terrestrial race that established city-states in Iraq.

I don’t at all dismiss the Ancient Astronaut theories (particularly in regard to Sumer, for which the evidence seems very solid): but I find people’s enthusiasm for the ‘space gods’ very odd, given that the Sumerian record seems to suggest a less-than-idyllic state of affairs.

That’s not what Professor Hawking was referring to in his warnings (I assume); but given some of the contemporary obsession with the ‘return of the space gods’, it’s all related.

In terms of establishing contact between mankind and some as-yet-unknown alien civilisation, it is entirely 50/50 whether that civilisation would be benevolent or malevolent. This image here, by the way, is from the 1960s Star Trek episode ‘The Corbomite Maneuver’ – that alien’s face always scared the crap out of me.

In terms of Professor Hawking advocating our migration from the planet and our becoming a space-faring civilisation, that seems like it has to be an inevitable later stage in our evolution (whether or not it has anything to do with the planet becoming untenable for us). There is already well-founded suspicion that we may be a space-faring civilisation already – and that a secret space programme has been in operation for some time.

When I ponder the possibility, however, of mankind expanding into space and getting off-planet, there are causes for concern. It might be tempting to immediately envision it as some idyllic Star Trek situation where a peaceful, unified human civilisation ventures forth into space: but, again, given our track record, it might just as easily end up looking more like the movie Elysium – in which the wealthy elites live in luxury out in space, while the vast mass of lower-class humanity is left to slug it out and fight for scraps in abject conditions on the Earth.

But Hawking wasn’t wrong to think that people seem to be getting more stupid with each passing day – and getting conspicuously close to the world depicted in Idiocracy. How much of that is by engineering (as in the belief held by some that we are being deliberately dumbed down via what we eat, what we watch on TV and how we’re goaded into viewing the world, etc) and how much of it is just our own fault is hard to say.

There have actually been a couple of studies published in recent years purporting to demonstrate a decline in general intelligence, one of the theories being that cleverer people are generally having fewer babies and less-clever people are generally having more: meaning that, over time, the results are going to be problematic for societies.

Notions of cleverness and stupidity get very tricky and very subjective, though, as that kind of argument doesn’t account for different types of intelligence. It also seems to assume that intelligence is inherited or genetic, which I suspect doesn’t hold true.

This wasn’t really what Hawking was talking about though: I think he was talking more in cultural terms – as in nurture and not nature.

In other words, I would suggest the problem is that intelligence and intellect are not being nurtured in broader society. When we get to a point where our societies don’t encourage us to think intelligently or to value intellect – or in fact encourage us to be dumb – our collective intelligence level is inevitably going to go down the toilet.

You even end up in a situation where intellectuals are viewed with suspicion – and, arguably, this is even something actively sought by some politicians, institutions, corporations and media organisations, because the dumber we are, the easier we are to manipulate or lie to.

How much of Professor Hawking’s fears for the future end up being validated remains to be seen. I’m sure he would rather be remembered more for his published works and his rich contribution to scientific thinking, debate and understanding.

I’m a bit embarrassed to say that I never got through A Brief History of Time in its entirety (I found Asimov’s and Carl Sagan’s books more accessible): to be fair to myself, I was a teenager when I tried to read it – and I probably need to try again with a (slightly) more grown-up mind.

However, one also hopes that some of his recent warnings or reservations might echo in the minds of institutions, innovators and legislators, as our human and technological journey continues to accelerate at such a rapid pace.

Read more: ‘Transcending the Uncanny Valley: AI & the Societal Landscape’, ‘Cheating Death: Could Dinosaurs Be Restored to Life Within 10 Years?’, ‘Immortality – Would You Want to Live Again?’

There is a lot of science fiction out there these days and much mental speculation. Implanted souls? Aliens taking the place of God? Alien mania is the new mythos for atheists.

As to deciphering ancient cuneiform texts and any other ancient written records to be found elsewhere on the planet, one must be a little skeptical. First, how can we moderns be sure of the accuracy of the deciphering or the translation into modern languages? Second, do we take these ancient writings at face value, i.e. literally? Many old stories or accounts were allegorical in nature.

Nonexistent aliens also make for useful scapegoats, to cover up secret manipulations.

Centuries ago, the secret police would sometimes hide in attics, acting as ghosts.

Nowadays there is cybernetics, which has the potential for great good but has also been applied in other ways.

So well written and presented 🙂 And I would consider all Hawking’s warnings as being seriously worth considering.

A really good article to read, my earthling friend, thank you!

Professor Hawking’s warnings were interesting, and if we look at the way the elites who rule the world think, these warnings could open up new opportunities for them. Take, for example, his warnings about AI: in my opinion, once human workers’ jobs are taken out of their hands and given to robots (that is, once the elites present AI as a threat), human workers will have to sell their labor much more cheaply. Or perhaps labor won’t be cheaper; instead, working conditions without insurance may be waiting for human workers.

By saying to a construction worker (for example), “I have a robot to do this job instead of you; it costs the same as the salary I would pay you, and the robot’s yearly maintenance costs are nothing compared with your insurance, so our human workers are not covered by insurance,” an exploitative order is likely to be created. Perhaps in 100 years they could even write this into states’ constitutions as law: “Human labor is uninsured.”

Regarding the destruction of the planet, the elites are again in the foreground. When the elites who ruined the planet, and who reaped the highest and sweetest abundance/profit from that ruin, go off to outer space, planet Earth will likely be used exclusively for mines and underground water resources. In that case, I think there will be only poor communities of people working in these sectors, with no other hope or chance except staying on planet Earth. This, of course, is my prediction, perhaps for around 200 years from now.

And by the way, if extraterrestrials with the ability to travel between planets came here to invade, would this process be interrupted? Well, for reasons you can guess, I don’t know how to answer this question. Haha!

If I try to respond along the lines of Hawking’s warnings – for example, what he said: “Humanity should not respond to aliens, in case they accidentally kill us.” My advice to human beings, if that event happens, is to pretend to be dead. Haha! (I can’t stop myself from making jokes. :))

Anyway, kidding aside, isn’t the problem he mentioned (another species that will kill people, accidentally and collectively or not, consume the planet’s resources, invade the planet and enslave human beings) already happening on planet Earth now? In other words, isn’t a group made up of 1% of the human race killing the other 99% with the wars and pollution it creates, and with unhealthy conditions, foods and drugs? Aren’t the planet’s resources already being consumed by this 1%? Isn’t the 1% running the other 99% as it wants, even if not under the name of slavery? The name isn’t exactly slavery, because there is a fairy tale called “democracy” on this planet. On top of all that, the 1% manipulatively directs the masses as it wants. (No alien species is manipulatively directing the masses.) So isn’t the 1% doing much more harm than a possible alien threat? Then what is the rationale? If an alien species invaded the world, the “alien threat” would turn into propaganda for the 1%, in the name of protecting the human species.

I’ll even go a step further and say that if an alien species came to invade, then while the 1% was provoking the other 99% of people against that alien species, on the other side the 1% would sit at the negotiating table with the aliens, pursuing only its own interests. Again, the 99% would be sacrificed. Even the invading alien species might suddenly get the shaft, haha!

Also, have you never heard of Muazzez İlmiye Çığ, who is very good in that scientific field (one of the three great Sumerologists now living)? She is 103 years old and a very productive person. She has written dozens of books on the Sumerians based on her research. If you search the internet, maybe you can find some of them translated into English. If you can, I especially recommend her book “The Origins of the Koran, the Bible and the Torah in Sumer”.

Two of her books also contain information about the Anunnaki you mentioned. The Anunnaki were not aliens but a kind of prince, of royal blood, who were identified as helper gods (or given the title of helper god). You need to know the Sumerian religion to understand the subject better. In their religion there were divine powers (as in many kingdoms throughout the history of the monotheistic religions), and, by the will of the gods, a kind of minor-god role was given to those people who had power over others. As I said, if you read about their understanding of religion, it becomes clearer. Think of the reliefs and Sumerian inscriptions as symbols of their religious understanding, transferred into writing and pictures with great imagination, my earthling friend. So they were not aliens; in short, they were only humans.

PS: I don’t know how the last three months passed so quickly. 2018 started very fast for me on this planet. I’ve seen that you shared some blog posts in the last few months, some of them exactly for me, you know, my earthling friend. 😉 I hope to read them as soon as possible, when I get some free time.

No worries, my ET friend – catch up in your own time.

Also, I apologise if I offend your species by talking about dangerous aliens 🙂

You make very good points – and you’re probably right about most of it.

On the subject of Sumer and the Anunnaki – no, I haven’t heard of the author you mentioned. The books I read were all from about 15 or 20 years ago, and those authors were all convinced the Anunnaki were extra-terrestrial in origin – so I would be interested to read about this different theory you’ve mentioned.

You do hit on a good point that has been obvious for a long time – specifically, that all three Abrahamic religions (Christianity, Islam, Judaism) are easily traced back to Sumer and Babylon: if we can prove that Judaism is derived entirely from Sumer (and we can), then Christianity and Islam – both derived from Jewish thought – have to be seen the same way too.

If we care to unravel the Sumerian history, then we already know that the aliens of earth are the Earthians (as we call them) who call themselves human but are only pseudo-human. There are ways to discover our past on this world. One way is archaeology and deciphering the cuneiform writing, what’s been salvaged anyway. Earth people, or man, or Earthians are not natural to this world and never evolved as claimed by Darwinists. The creation story is closer to the truth. The Anunnaki invaded earth within the last million years. They certainly left their technological mark everywhere.

Man (Earthians) was designed as a dumbed-down slave species cloned from Anunnaki and earth proto-human DNA – a hybrid capable of learning and taking orders without question, but with a great part of its brain closed off so it could not access it. The creature (bear with me here) was equipped with an implant so it could be controlled and manipulated any which way. Want a smart one? Tweak the implant. Want to dumb down a whole bunch at a time (think of the Tower of Babel episode)? Tweak the implant the other way. Want a brain-dead army to follow a psychopathic leader? More tweaking. Of course it isn’t called “tweaking” – it’s called a number of things, like patriotism or brainwashing or propaganda.

The implant is called the soul. Everyone still gets one because the implanting is programmed to be done automatically and artificially. The process is part of the patriarchal hierarchy and of civilization. Imagine that: the Catholic Church was actually correct when it claimed that certain non-civilized Earthians did not have a soul. Women, it was also claimed, did not have a soul. Excuses for oppression and enslavement? Or actual fact? It seems that to be permitted to rule, you had to have a soul. No wonder women have such a hard time seeking equality within the patriarchy. I’d be willing to bet that, if we ever devise a tool (Matrix style!) to pinpoint a soul, we’ll discover that those “iron” women (Thatcher, for example) are implanted whereas the peacemakers are not.

Back to Hawking. The problem with high-end scientists is that they are too smart for their own good. They can see a situation, but they can’t see the forest for the trees. They cannot accept that there is a forest unless they first count the number of trees, then label said trees according to species, etc. By the time they’re done counting, who cares? Only those who need to read their papers to pass a course and become another tree counter. Scientists, with exceptions, usually cannot blend the quantifiable with more esoteric awareness. Hawking observed a phenomenon but couldn’t source it. I just hope he’s having a good time partying with Einstein, Tesla, Galileo, da Vinci and a few others. Nuff said.

Really interesting points. I would argue, though, that even the highest-end scientists don’t need to formulate absolute answers to *everything* all at once (i.e. the forest). They’re better off focusing on specific trees, so that the rest of us can take their findings about those specific trees and apply them to our perception/understanding of the whole forest.

Or something like that.

“…if we can prove that Judaism is derived entirely from Sumer (and we can), then Christianity and Islam – both derived from Jewish thought – have to be seen the same way too.” You are definitely right about this, my earthling friend!

And, no, you did not offend me, you can be sure. Your remarks about this pale in comparison with WD’s. :) (You know him, or are familiar with him, from my (WD’s and my) blog; he is my dog comrade on this planet.)

His greatest passion is to uncover the true face (according to him) of the extraterrestrials and to unite the earthlings against the future alien invasion. He has complained, and still complains, about me to my blog friends many times. According to him, this is an important duty, and he believes that one day he will drive me into a corner and learn the truth(!) about the alien threat. He is also a fan of Hawking (because, as you can predict, of Hawking’s words about possible alien threats); after Hawking’s death, WD even declared three days of mourning. Yes, during those three days I rested my head. :)

There is some probability that, since around the mid-1980s, there has been a stand-in or replacement for Hawking. Daily Mail Online of 12/13 January 2017 summarises its overview of the relevant conspiracy theories by saying that maybe the conspiracy theorists are right this time.

http://www.dailymail.co.uk/femail/article-5261939/Has-Stephen-Hawking-replaced-puppet.html

http://milesmathis.com/hawk3.pdf

Any significant differences between the views and theorizing of the younger and later-era Hawking may be considered against that background.

And concerning the march into idiocracy: as Larryzb comments, the dumbing-down is by design.

petergrafstrm, yes, I had come across that theory that Professor Hawking had actually died years ago and been replaced – but I never really looked into it much as it sounded silly to me.

Nevertheless, thanks for providing links, so that others can check it out themselves.

The fact that people are being dumbed down is no accident – it is by design. The schools are now institutions of indoctrination.

Hawking’s warning of perhaps only another 100 years or so for humanity may turn out to be quite correct. Overfishing has depleted fish stocks around the planet and, if not stopped and reversed, will result in a largely lifeless ocean by mid-century (2050). That is the latest warning we saw just this past week. The hour is late.

Appropriate thoughts here… you may want to change “omnipotent” (all powerful) to “omniscient” (all knowing)…